Save time with reliable and digestible research summarization

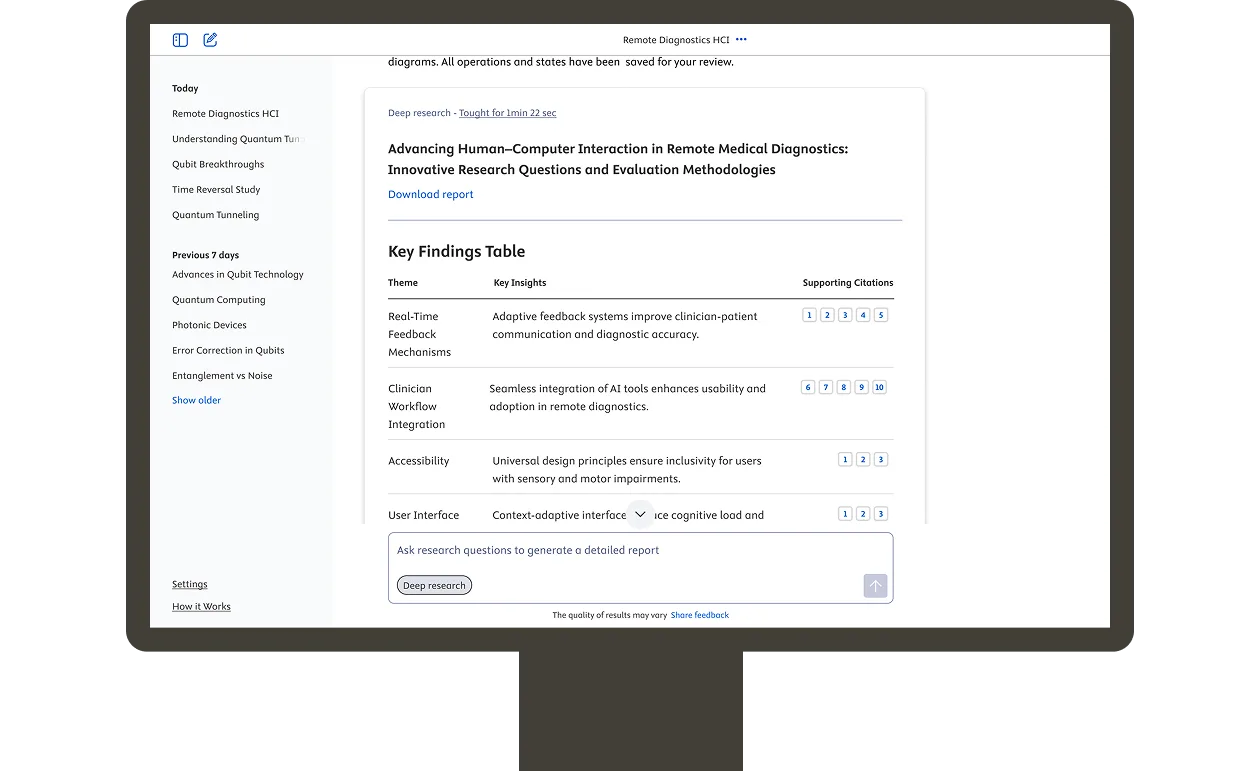

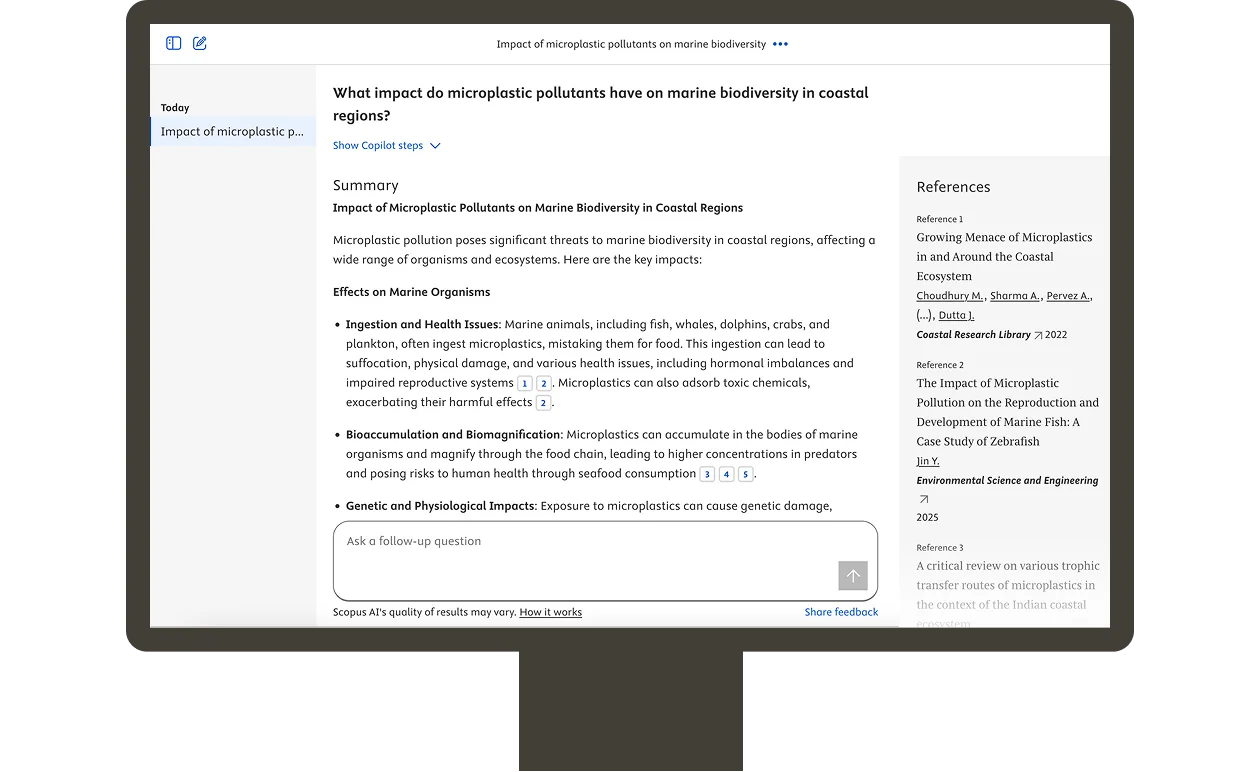

Type a query into AI Discovery in the words, format and language of your choice. AI Discovery then sources and uses relevant Scopus content to generate a Topic summary and an Expanded summary.

Each response references the sources used and indicates the tool's confidence in their relevance. If it can't find sufficient evidence, our strict prompt engineering instructs AI Discovery to tell you so and suggest alternative queries, greatly reducing the risk of hallucinations.

Conversational history provides an overview of all the topics you've previously explored, allowing you to revisit key insights anytime and resume queries where you left off.