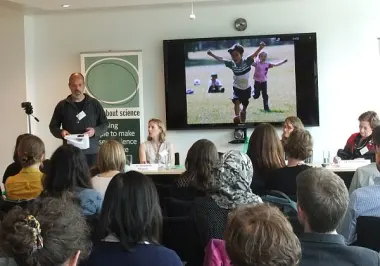

Securing and implementing a transformative agreement: Perspectives from library leaders

Explore how library leaders finalize an agreement’s terms and ensure the successful implementation of a transformative agreement that supports their strategic goals.